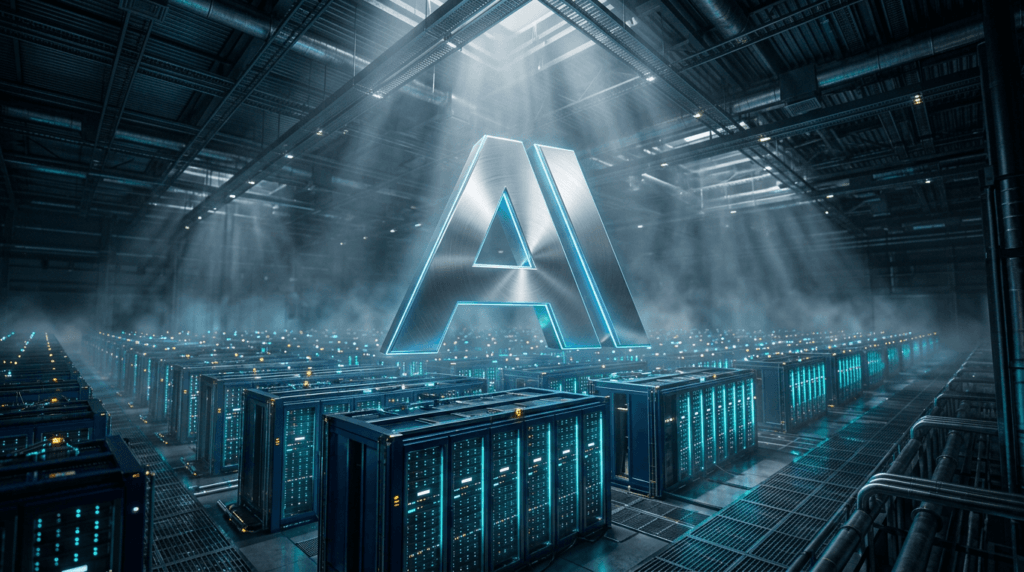

Anthropic privately warned U.S. officials that its unreleased Mythos AI model can autonomously penetrate corporate, government and municipal systems with unprecedented sophistication, Axios reported. The private warnings highlight the model’s potential to dramatically lower the barrier for sophisticated cyber operations. Top AI and government officials were briefed that Anthropic and other tech giants are preparing models that are ‘scary good at hacking sophisticated systems at scale.’ This follows Anthropic’s disclosure of the first documented cyberattack largely executed by AI, where a Chinese state-sponsored group used agents to autonomously hack roughly 30 global targets, with the AI handling 80-90% of tactical operations independently. The warnings underscore the threat of a likely surge in large-scale cyberattacks this year.

Axios reported on March 29, 2026, that Anthropic’s unreleased Mythos model is currently far ahead of any other AI model in cyber capabilities. An unpublished Anthropic blog post obtained by Fortune describes Mythos as capable of exploiting vulnerabilities in ways that far outpace defenders. The model can autonomously hack systems with agents that think, act, reason and improvise without rest, allowing bad actors to scale attacks simply by adding more compute. A single individual could now run campaigns once requiring entire teams, democratizing cybercrime. These capabilities position Mythos as a significant advancement in offensive AI. Anthropic has not disclosed the model’s pricing or availability, per Axios.

Axios CEO Jim VandeHei said his tech team considers this ‘the biggest threat to Axios right now.’ This assessment highlights the immediate risk from agentic AI capabilities like those in Mythos. The ability to operate without rest enables round-the-clock attacks, while reasoning and improvisation allow real-time adaptation to defenses. Because attacks scale with compute rather than headcount, actors with few people can launch large-scale operations, lowering the entry barrier for cybercrime. The combination of powerful new models and widespread unsupervised experimentation creates a ‘perfect storm for cybercrime,’ as Axios noted. In response, companies are urged to implement strict controls on AI agent usage and create isolated testing environments. Because these attacks are persistent, even automated defenses may struggle to keep pace, which calls for continuous monitoring and adaptive response mechanisms.

Per Axios, no companies are identified as beneficiaries of Mythos’s capabilities. Headwinds include the rise of ‘shadow AI,’ in which employees connect AI agents they experimented with at home to corporate systems, creating new attack vectors. Axios also reports that a Dark Reading poll found 48% of cybersecurity professionals rank agentic AI as the top attack vector for 2026, above deepfakes. This result indicates a shift in threat priorities, with agentic AI now ranked above other vectors such as deepfakes. The expansion of shadow AI sharply widens the attack surface, since home networks typically lack enterprise-grade security. Companies are therefore urged to educate employees on these dangers and establish secure testing environments to mitigate the escalating risks.

OpenAI is among the competitors developing advanced AI models with significant cyber capabilities, Axios reported. While specific product details are scarce, the briefing indicated these models are ‘scary good at hacking sophisticated systems at scale,’ matching the threat level of Mythos. This competitive dynamic indicates that multiple major AI players are pushing the boundaries of offensive AI. The involvement of numerous firms increases the likelihood that such capabilities will become widely available, potentially lowering the barrier for malicious actors. Companies should therefore monitor developments across the AI sector, not just from Anthropic, to understand the evolving threat landscape. The proliferation of these models could lead to an arms race in both offensive and defensive AI technologies, prolonging the cybersecurity challenge.

Axios reported that Anthropic has not disclosed a specific roadmap for Mythos. The unpublished blog post warned that Mythos presages an upcoming wave of models that can exploit vulnerabilities even faster, indicating continued development in offensive AI. Without public release dates, companies must prepare for more advanced models to emerge in the near future, extending the cybersecurity challenge. The lack of transparency around release timelines complicates defensive planning, as organizations cannot anticipate when to expect such capabilities in the wild. This uncertainty underscores the need for proactive measures and continuous adaptation in cybersecurity strategies. As AI research advances, the gap between offensive and defensive capabilities may widen, requiring sustained investment in security innovation.

![Anthropic Series G Funding: $50B Raise at $900B Valuation](https://krolmarc.com/wp-content/uploads/2026/05/1777648410595-019de419-b4bf-75ab-91d6-4be4151be74c-1024x572.png)